Five big ideas to learn at Big Data Tech Warsaw 2020

Hello again in 2020. It’s a new year and the new, 6th edition of Big Data Tech Warsaw is coming soon! Save the date: 27th of February. We have put…

DynamoDB is a fully-managed NoSQL key-value database which delivers single-digit millisecond performance at any scale. However, to achieve this kind of performance for non-trivial use cases with huge scale and traffic, you need to model your data carefully. In this respect, DynamoDB is no different from other NoSQL databases, where you generally need to “forget” your RDBMS training (e.g. normalization) and do things differently. One thing that stands out in DynamoDB is the focus on keeping as few tables as possible. The AWS DynamoDB docs repeatedly state:

As a general rule, you should maintain as few tables as possible in a DynamoDB application.

To better understand why that is (keeping few tables, ideally only one) and why it might be beneficial, let's briefly review the DynamoDB data model.

Table - DynamoDB borrows the nomenclature from RDBMS and, similarly to other database systems, stores data in tables. However, apart from the mandatory primary key, it is basically schemaless and in the same table you can store a collection of heterogeneous items.

Item - an item is an identifiable group of attributes (think of rows or records in other db systems).

Attribute - an attribute is a fundamental, named block of data. Data can be primitive, e.g. a number, a string, or a complex object, e.g. a map or list (think of columns or fields in other db systems).
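To make these three terms concrete, here is a minimal sketch of two heterogeneous items living in one table, modelled as plain Python dictionaries. The attribute names and values (`pk`, `title`, `name`, `address` and the key values) are illustrative assumptions, not a prescribed schema.

```python
# Two hypothetical items stored in the same table. Only the primary key
# attribute ("pk" here, an assumed name) is mandatory; the rest may differ.
movie = {"pk": "mov#1", "title": "Movie One", "year": 2020}
actor = {
    "pk": "act#7",
    "name": "Actor Seven",
    "languages": ["en", "pl"],      # list attribute
    "address": {"city": "Warsaw"},  # map attribute
}
table = [movie, actor]


def shared_attributes(items):
    """Return the attribute names that are present in every item."""
    return set.intersection(*(set(item) for item in items))


common = shared_attributes(table)
print(common)
```

The only attribute the two items have in common is the primary key, which is what "schemaless apart from the primary key" means in practice.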

Primary key

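The only mandatory attribute in an item is a primary key, which comes in two flavours: a simple primary key, consisting of a partition key only, and a composite primary key, consisting of a partition key and a sort key.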

Whichever primary key scheme you use, it’s very important to select a good partition key, i.e. one with many distinct values relative to the number of items, which ideally are accessed uniformly. You should avoid “hot partitions”, i.e. partitions that are accessed far more frequently than the others. DynamoDB uses partition keys, as the name implies, to partition your data physically, and to get the best performance the workload should be spread among all or most partitions. Otherwise performance might suffer.
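The effect of a skewed partition key can be illustrated with a small simulation. This is only a sketch: DynamoDB’s actual hash function and partition count are internal, so `hashlib.md5` and the four partitions below are stand-in assumptions.

```python
import hashlib
from collections import Counter


def partition_for(key: str, partitions: int = 4) -> int:
    """Map a partition key to a partition number (md5 is a stand-in hash)."""
    digest = hashlib.md5(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % partitions


def load_per_partition(keys, partitions: int = 4) -> Counter:
    """Count how many requests land on each partition."""
    return Counter(partition_for(key, partitions) for key in keys)


# 1000 requests spread over 1000 distinct keys.
uniform = load_per_partition(f"mov#{i}" for i in range(1000))

# 1000 requests, 990 of which hit the same key: a hot partition.
skewed = load_per_partition(["mov#1"] * 990 + [f"mov#{i}" for i in range(2, 12)])

print("uniform:", dict(uniform))
print("skewed: ", dict(skewed))
```

In the skewed case one partition receives at least 990 of the 1000 requests, no matter how many partitions exist.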

In a nutshell, the primary key’s components play the following roles

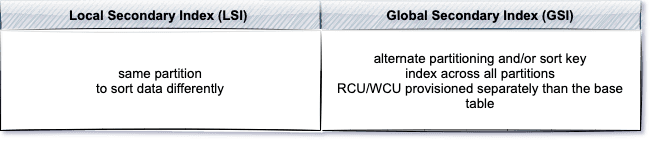

In addition to the base table, you can set up secondary indexes to improve query performance. You’ve got two options - a Local Secondary Index (LSI) and a Global Secondary Index (GSI).

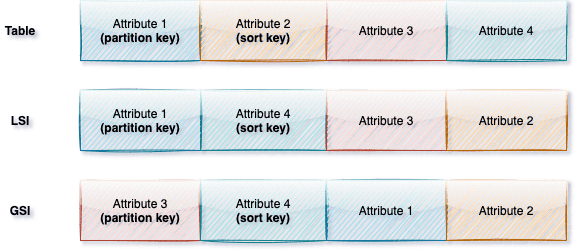

In summary, DynamoDB’s table structure looks as follows (different attribute projection types, i.e. all, include, keys only, for secondary indexes not included in the picture).

Modelling a DynamoDB database can take one of two distinct approaches: a multi-table design, which resembles RDBMS modelling (just with more constraints) and stores entities in separate tables, or a single table design, where all entities are stored in one common table.

The most straightforward way to design your DynamoDB data model is to follow the best practices used in the RDBMS world, i.e. entity per table and some degree of normalization (the schemaless nature of DynamoDB and its support for complex attributes give you more options to express 1-to-* relationships, e.g. think about PostgreSQL with JSON data types). Translating an RDBMS model to NoSQL without changes would also result in many tables. However, since DynamoDB has no table joins, every join would have to be translated into multiple requests to the database. This approach incurs several costs at scale.

The most important and the biggest cost you pay with the multi-table design is the need for multiple network trips to fetch joined entities. This can obviously impact performance, and often it cannot be mitigated with parallel calls. Multiple serial requests don’t scale and, as you scale, your application gets slower. Let’s see how you can remedy this with a very different approach - using only one table.

The main benefit of joins in relational databases is the ability to fetch heterogeneous items with a single request. However, joins are notoriously hard to scale and DynamoDB purposely doesn't offer any ability to join tables. Instead, in order to deliver fast and consistent performance, DynamoDB prompts developers to simply pre-join data into item collections which you can fetch with just a single request.

An item collection is a group of items, in a table or in an index, that shares the same partition key. Given the flexible data model of a DynamoDB table, an item collection can consist of multiple heterogeneous items. This simple concept, along with composite keys and secondary indexes, lets you model all typical entity relationships, i.e., one-to-one, one-to-many, many-to-one and many-to-many. A simple example should demonstrate this much better.
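As a rough in-memory illustration of the idea, the sketch below groups items by partition key. The attribute names `pk`, `sk` and `entityType`, as well as the key values, are placeholder assumptions made for this example.

```python
from collections import defaultdict

# Hypothetical items: a movie and its actors share the partition key "mov#1".
items = [
    {"pk": "mov#1", "sk": "mov#1", "entityType": "movie", "title": "Movie One"},
    {"pk": "mov#1", "sk": "act#2", "entityType": "actor", "name": "Actor Two"},
    {"pk": "mov#1", "sk": "act#1", "entityType": "actor", "name": "Actor One"},
    {"pk": "mov#2", "sk": "mov#2", "entityType": "movie", "title": "Movie Two"},
]


def item_collections(all_items):
    """Group items by partition key, ordering each group by sort key."""
    collections = defaultdict(list)
    for item in all_items:
        collections[item["pk"]].append(item)
    for collection in collections.values():
        collection.sort(key=lambda item: item["sk"])
    return dict(collections)


collections = item_collections(items)
movie_one = collections["mov#1"]  # one lookup returns the movie and its cast
print([item["sk"] for item in movie_one])
```

A single lookup by partition key returns both entity types at once, which is the "pre-joined" result a relational database would assemble with a join.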

The goal here is to present the concept and not to delve much into a particular use case, so let's focus on a simple IMDB-like scenario. We’ve got lists of movies and actors. It’s a many-to-many relationship: movies cast many actors, actors play in many movies. We’ll start with a standard ER (Entity-Relationship) diagram first, before matching it to any particular database schema. In our simplified example we’ve got just two entities: actors and movies, and the ERD is very simple.

Single table design is driven by access patterns. Once you’ve found entities and relationships, you need to think about access patterns: what aggregations there are, what you’re reading or writing and which use cases you want to optimise (but beware of premature optimisation). Also keep in mind that sometimes things are easier to aggregate or filter out on the client/application side, so complicating things in the database engine is not always the best approach. A good candidate for client-side processing is a case where filtering is hard and the dataset is small.

Let’s list some basic, arbitrary access patterns we would like to support

Additionally, to make things a little bit more interesting and complicated, let’s assume that we would also like to display the most streamed movies.

From the access patterns listed above we can see that some are quite trivial, e.g. getting an entity by ID is a standard key-value access pattern where NoSQL databases excel. Others require more thought, e.g. listing the cast of a movie is a one-to-many relationship that could easily be handled with a partition key + sort key duo. But how would we fit the reverse relationship (getting movies by an actor) into the same table?

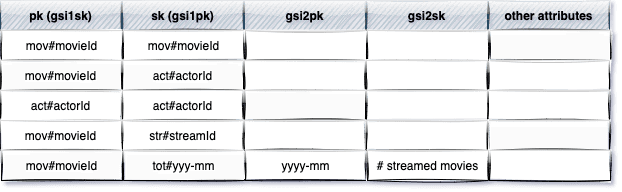

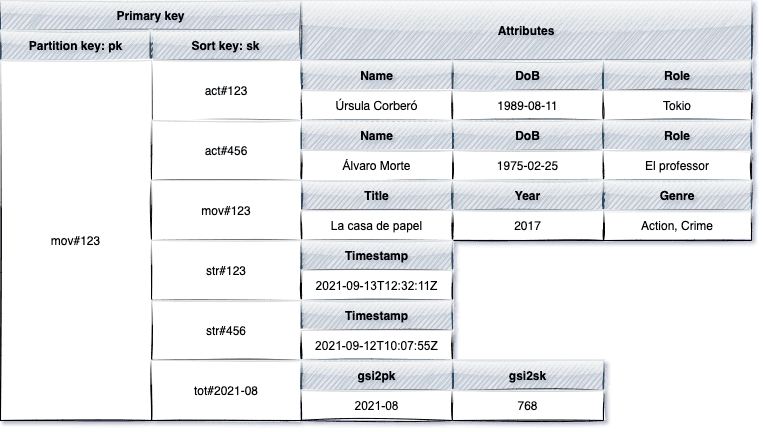

One possible design is presented below:

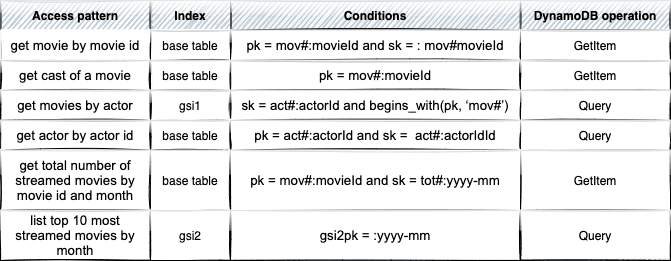

The design above translates into the access patterns as follows:

To keep things relatively simple and short, the number of access patterns here is small. In real-world applications it’s not uncommon to handle a dozen or even a few dozen access patterns with just the base table and a few secondary indexes. Even with this small design, however, there are a few things worth mentioning.

First and foremost, when querying for the cast of a movie, we only use the partition key portion of the primary key. This way, we fetch all data related to the given movie (its item collection) with a single request to the database. So, not only do we get actors, but we also get movie details, streaming totals per month and individual streams (of course we could also add a condition on the sort key, e.g. begins_with(sk, 'act#'), to get actors only).
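The two variants of this query can be sketched as request parameters for the DynamoDB Query operation. The parameter names (`TableName`, `KeyConditionExpression`, `ExpressionAttributeValues`) come from the Query API; the table name `movies` and the helper `movie_query` are hypothetical.

```python
def movie_query(table_name: str, movie_id: str, actors_only: bool = False) -> dict:
    """Build Query parameters for a movie's item collection.

    With actors_only=True a sort key condition narrows the result to the cast.
    """
    params = {
        "TableName": table_name,
        "KeyConditionExpression": "pk = :pk",
        "ExpressionAttributeValues": {":pk": {"S": f"mov#{movie_id}"}},
    }
    if actors_only:
        params["KeyConditionExpression"] += " AND begins_with(sk, :prefix)"
        params["ExpressionAttributeValues"][":prefix"] = {"S": "act#"}
    return params


# "movies" is an assumed table name.
whole_collection = movie_query("movies", "1")
cast_only = movie_query("movies", "1", actors_only=True)
print(cast_only["KeyConditionExpression"])
```

Such a dictionary could be passed to a low-level client, for example as `client.query(**cast_only)` with a boto3 DynamoDB client. Either way it is one request, regardless of how many entity types the collection contains.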

Retrieving multiple, heterogeneous items in a single request is the most important benefit of the Single Table Design and the main reason you might consider using it in performance critical use cases! Unlike a relational database with many entities and joins among them, this pre-join access pattern represents constant time data retrieval at scale.

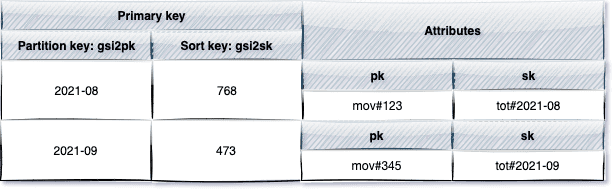

Secondly, you can see that attributes denoting primary keys have very generic names, e.g. pk, sk. It’s just a convention, but having specific attribute names, e.g. MovieId, wouldn’t make much sense. After all, we store different items in the same table. Storing heterogeneous items in one table is an example of partition overloading. A common practice is to add an attribute, e.g. entityType, with human-readable values like movie or actor. It makes it easier to understand your PKs and SKs, and it can also be used in the implementation to identify and process these entities. Similarly, you can design your secondary indexes with the overloading approach. Although currently there is only one use case for gsi2pk and gsi2sk, naming them that way will make things less confusing in the future, when you might reuse this index for different items and thus take advantage of index overloading.

You can also see that key values are prefixed with some constants, e.g. mov#, act# etc. It lets you distinguish entity types and filter them out.

Note: Another common practice is to have hierarchical sort keys, e.g., the following form country#region#city#zipCode#street lets you query entities at any level of the hierarchy.
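A hierarchical sort key and the prefixes used to query it can be sketched as follows. The helper names and the sample addresses are made up for illustration; `str.startswith` stands in for a `begins_with` condition on the sort key.

```python
LEVELS = ("country", "region", "city", "zipCode", "street")


def hierarchical_key(**parts: str) -> str:
    """Join the given levels top-down, stopping at the first missing level."""
    values = []
    for level in LEVELS:
        if level not in parts:
            break
        values.append(parts[level])
    return "#".join(values)


def prefix_for(**parts: str) -> str:
    """Prefix matching everything below the given level.

    The trailing '#' prevents 'PL#Ma' style partial matches on a level.
    """
    return hierarchical_key(**parts) + "#"


def begins_with(keys, prefix):
    """In-memory stand-in for a begins_with sort key condition."""
    return [key for key in keys if key.startswith(prefix)]


addresses = [
    hierarchical_key(country="PL", region="Mazowieckie", city="Warsaw",
                     zipCode="00-001", street="Main"),
    hierarchical_key(country="PL", region="Malopolskie", city="Krakow",
                     zipCode="30-001", street="Market"),
    hierarchical_key(country="DE", region="Berlin", city="Berlin",
                     zipCode="10115", street="Ring"),
]

in_poland = begins_with(addresses, prefix_for(country="PL"))
in_warsaw = begins_with(
    addresses, prefix_for(country="PL", region="Mazowieckie", city="Warsaw")
)
print(in_poland)
print(in_warsaw)
```

The same sort key serves queries at country, region or city level simply by shortening the prefix.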

Thirdly, we’ve used a reversed secondary index, GSI-1, to model the many-to-many relationship between movies and actors. One leg of this relationship is accomplished by the partition key and the sort key of the base table. The relationship back uses the same attributes, only in reverse order.
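The reversed index can be mimicked in memory by swapping which attribute plays the partition key role. This is a simplified sketch with assumed sample items; a real GSI is maintained by DynamoDB and queried by index name.

```python
# Hypothetical relationship items: pk identifies the movie, sk the actor.
items = [
    {"pk": "mov#1", "sk": "act#1"},
    {"pk": "mov#1", "sk": "act#2"},
    {"pk": "mov#2", "sk": "act#1"},
]


def query(all_items, partition_attr, sort_attr, partition_value, sort_prefix=""):
    """Return items with the given partition value, ordered by the sort attribute."""
    matching = [
        item for item in all_items
        if item[partition_attr] == partition_value
        and item[sort_attr].startswith(sort_prefix)
    ]
    return sorted(matching, key=lambda item: item[sort_attr])


# Base table: partition key = pk, sort key = sk.
cast_of_movie = query(items, "pk", "sk", "mov#1", "act#")

# Reversed index: partition key = sk, sort key = pk.
movies_of_actor = query(items, "sk", "pk", "act#1", "mov#")

print([item["sk"] for item in cast_of_movie])
print([item["pk"] for item in movies_of_actor])
```

Both directions of the many-to-many relationship are answered from the same items; only the roles of the two key attributes change.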

Fourthly, the second secondary index, GSI-2, is an example of a sparse index, i.e. an index whose key attributes do not appear in every item of the base table, so only the items that carry them end up in the index. Sparse indexes are used to query a small subset of the main table (in our example, just totals). You might provision them with a lower write throughput than the base table without affecting performance.

A typical usage of sparse indexes is only listing some flagged entities, e.g. all drafts. In our example we could extend our requirements with the following

One way to do it would be to introduce a third secondary index with the partition key on pk and the sort key on a new actor attribute, e.g. oscar. The oscar attribute could be just a simple flag (index key attributes must be a string, number or binary, so not a native boolean), but we could also benefit from the sorting capabilities of the sort key and store the date when the actor was awarded there, so that the list would be sorted by award date.
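Which items end up in a sparse index can be sketched with a simple filter. The sample actors and award dates below are invented, and the function only models index membership and ordering, not the partitioning of the index.

```python
# Hypothetical actor items; only some of them carry the "oscar" attribute.
actors = [
    {"pk": "act#1", "name": "Actor One", "oscar": "2019-02-24"},
    {"pk": "act#2", "name": "Actor Two"},
    {"pk": "act#3", "name": "Actor Three", "oscar": "2015-02-22"},
]


def sparse_index(items, key_attr):
    """Items lacking key_attr are left out; the rest are ordered by it."""
    return sorted(
        (item for item in items if key_attr in item),
        key=lambda item: item[key_attr],
    )


awarded = sparse_index(actors, "oscar")
print([(item["pk"], item["oscar"]) for item in awarded])
```

Because ISO-formatted dates sort lexicographically, storing the award date as a string is enough to get the list ordered by date.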

Another interesting fact about GSI-2 is that it contains aggregations. DynamoDB does not offer much when it comes to aggregations. If you expect constructs like group by or a simple count(*), you’ll end up empty-handed. However, DynamoDB does provide a very nice feature: DynamoDB Streams. When you enable this feature and attach a Lambda function to the stream, inserts, updates and deletions made on the table trigger the function, which in turn can aggregate, group or calculate new data and insert or update an item in the table.
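A minimal sketch of such a function is shown below. The event layout (`Records`, `eventName`, `dynamodb.NewImage` with typed values such as `{"S": ...}`) follows the DynamoDB Streams record format; the `str#<ISO timestamp>` sort key for individual streams is an assumption, and the write-back to the table is deliberately left out.

```python
from collections import Counter

STREAM_PREFIX = "str#"  # assumed sort key prefix of individual stream items


def monthly_totals(event: dict) -> Counter:
    """Count newly inserted stream items per (movie, month)."""
    totals = Counter()
    for record in event.get("Records", []):
        if record.get("eventName") != "INSERT":
            continue  # only new streams change the totals in this sketch
        image = record.get("dynamodb", {}).get("NewImage", {})
        pk = image.get("pk", {}).get("S", "")
        sk = image.get("sk", {}).get("S", "")
        if not sk.startswith(STREAM_PREFIX):
            continue  # not a stream item, e.g. an actor or a movie
        month = sk[len(STREAM_PREFIX):][:7]  # "2020-01-15T10:00" -> "2020-01"
        totals[(pk, month)] += 1
    return totals


def handler(event, context):
    """Lambda entry point; a real function would upsert the totals here."""
    totals = monthly_totals(event)
    return {f"{pk}|{month}": count for (pk, month), count in totals.items()}


def _record(event_name, pk, sk):
    """Build a minimal stream record for the example below."""
    return {
        "eventName": event_name,
        "dynamodb": {"NewImage": {"pk": {"S": pk}, "sk": {"S": sk}}},
    }


sample_event = {
    "Records": [
        _record("INSERT", "mov#1", "str#2020-01-15T10:00"),
        _record("INSERT", "mov#1", "str#2020-01-20T18:30"),
        _record("MODIFY", "mov#1", "str#2020-01-21T09:00"),
        _record("INSERT", "mov#1", "act#1"),
    ]
}
result = handler(sample_event, None)
print(result)
```

In a real deployment the totals would be written back as items of their own, which is how the aggregated values in GSI-2 could be kept up to date.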

Let’s reiterate the steps you should take when modelling a single table for your application (in fact, this should be quite familiar if you’ve worked with NoSQL databases)

The need, the complexity and all the hassle required to achieve Single Table Design are motivated by one thing - reducing the number of requests for an access pattern, which usually translates to better performance at scale. That’s the key goal of Single Table Design. (Prior to the On-demand capacity mode in DynamoDB, having just one table could also save you some WCUs and RCUs, i.e. money. But the savings would be marginal, and the On-demand capacity mode makes this argument moot anyway.)

Would you use Single Table Design everywhere, for every use case? Definitely not. Single Table Design is not a silver bullet. You gain incredible scalability and performance, but you give up other characteristics which, for many use cases, might be more important.

One of the implicit costs you pay with the single table design is the complexity of this model in any non-trivial application. At first, it might look contradictory. Don’t you have just one table to worry about versus multiple tables? Well, yes, there is only one table. However, index/partition overloading, heterogeneous items, prefixed key values and the prevalence of generic names for primary keys in the base table (e.g. pk and sk) and in secondary indexes (e.g. gsi2pk, gsi2sk etc.) impose a heavy cognitive load on developers (and it gets worse as new indexes are added). You trade code readability/maintainability for performance.

The resulting data model is

It requires

This one is specific neither to DynamoDB nor to Single Table Design. It’s a more general issue when following an access-patterns-first approach in the NoSQL world. Use cases and requirements change, and it’s very likely that when new requirements come your carefully crafted model will not fit them. That’s not to say that Single Table Design is static - it evolves and changes with the application as any model does. Sometimes data migrations or introducing yet another secondary index might be required. It is doable, but with a single table it’s more difficult to accommodate new access patterns.

A general recommendation for complex aggregations is to use DynamoDB Streams and a Lambda function. This function could write back or update the table in DynamoDB, or send data to another storage, e.g. a data lake for some advanced analytics. Single table design makes it slightly more complicated to implement some common use cases like Change Data Capture (CDC) or data export for analytics. There are some ready-to-use tools and libraries for that, but they usually expect to receive a collection of homogeneous entities. With a single table you would typically need to filter and unwrap records, renaming attributes along the way.
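The filter-and-unwrap step could look roughly like this. It is a sketch under assumptions: the `entityType` attribute is present on every item, and the rename mapping (`pk` to `movieId`) and the dropped attributes are hypothetical choices for this example.

```python
# Hypothetical mixed records, as they might come out of a single table export.
records = [
    {"pk": "mov#1", "sk": "mov#1", "entityType": "movie", "title": "Movie One"},
    {"pk": "mov#1", "sk": "act#1", "entityType": "actor", "name": "Actor One"},
    {"pk": "mov#2", "sk": "mov#2", "entityType": "movie", "title": "Movie Two"},
]

# Assumed mapping from generic key names to names meaningful downstream.
MOVIE_RENAMES = {"pk": "movieId"}
MOVIE_DROPPED = {"sk", "entityType"}


def unwrap(items, entity_type, renames, dropped):
    """Keep one entity type, drop generic attributes and rename the rest."""
    rows = []
    for item in items:
        if item.get("entityType") != entity_type:
            continue
        rows.append({
            renames.get(name, name): value
            for name, value in item.items()
            if name not in dropped
        })
    return rows


movies = unwrap(records, "movie", MOVIE_RENAMES, MOVIE_DROPPED)
print(movies)
```

The output is a homogeneous collection that downstream export or analytics tooling can consume without knowing about the single table layout.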

There are some cases where you definitely wouldn't want to start with the Single Table Design.

Single Table Design is an interesting approach. It’s particularly applicable to large-scale, performance-critical applications. But you should also remember that the performance gain is not a free lunch. Improvements for specific access patterns could degrade other use cases and increase application complexity and maintenance costs. You also don’t have to buy into this approach 100% of the time. Single table design and multi-table design are not mutually exclusive. You could have, for instance, multiple tables and only apply single table design techniques in performance-critical parts of the application.
