In many of the business cases we solve with our clients at GetInData, we need to analyze sessions: series of related events generated by actors, in order to find correlations or patterns in the actors' behavior. Some of the cases include:

Generally speaking, this applies wherever behavioral analytics is used.

To research and develop applications for these use cases, we need to create test data for experimentation, functional tests, performance tests, demos and so on.

Randomly generated events produced by most data generators are not enough: the events they produce are unrelated to one another, so there are no actor sessions. A few projects have tried to tackle this problem. One of them is Interana/eventsim on GitHub, which was sadly abandoned a few years ago.

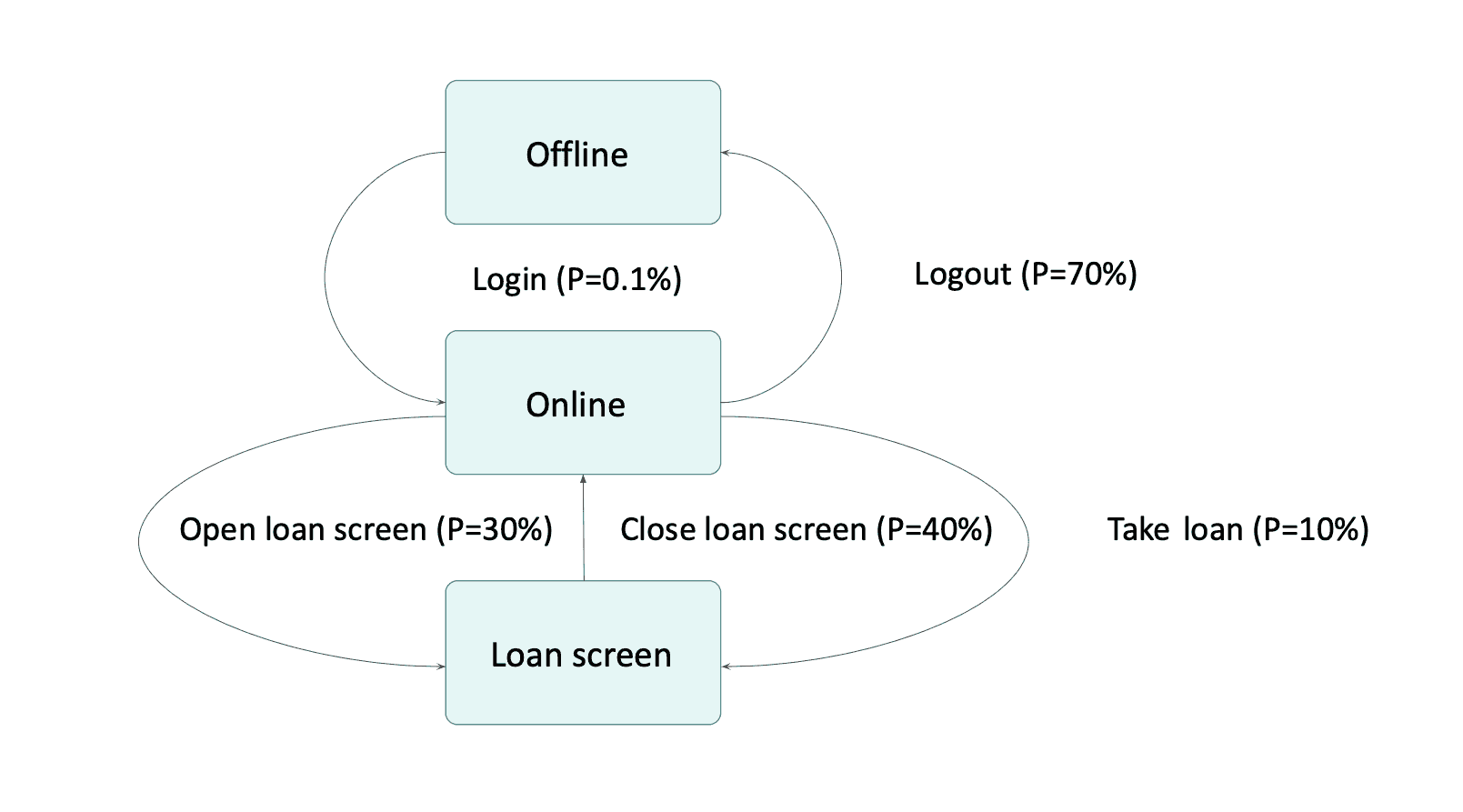

So, what could a solution that supports these use cases look like? We can start by modeling actor behavior: what the actor can do and how it can evolve over time. This can be expressed as a state machine.

A few things are still missing, though. For a start, we don't want to just have a state machine and then invoke transitions by writing lines of code; that would land us back where we started. We want our state machine to transition automatically, based on some probability.

Let's consider an example in which we are working on pattern recognition. We are trying to find banking application users who were thinking about taking a loan but did not take it. We want to target users with a low balance who opened the loan screen and exited without taking a loan. A model of such an interaction with the application could look like this:

A user can log into the application and, once they are Online, they can open the loan screen or exit the application. From the Loan screen they can either take a loan or exit.
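The idea of a state machine that transitions on its own can be sketched in a few lines of plain Python. This is only an illustration and not the tool's implementation: the `TRANSITIONS` table, the `step` and `simulate` helpers and all probability values except the 0.1% login chance are assumptions made for the example.

```python
import random

# Transition table: state -> list of (transition name, target state, probability per step).
# Only the 0.1% login probability comes from the text; the other values are assumed.
TRANSITIONS = {
    "offline": [("login", "online", 0.001)],
    "online": [("logout", "offline", 0.70), ("open_loan_screen", "loan_screen", 0.30)],
    "loan_screen": [("close_loan_screen", "online", 0.40), ("take_loan", "online", 0.10)],
}


def step(state, rng):
    """Advance one step and return (transition name or None, new state)."""
    roll = rng.random()
    cumulative = 0.0
    for name, target, probability in TRANSITIONS[state]:
        cumulative += probability
        if roll < cumulative:
            return name, target
    # No transition fired: the actor stays in the same state for this step.
    return None, state


def simulate(steps, seed=0):
    """Walk a single actor through the machine and collect (step index, event) pairs."""
    rng = random.Random(seed)
    state = "offline"
    events = []
    for tick in range(steps):
        name, state = step(state, rng)
        if name is not None:
            events.append((tick, name))
    return events


if __name__ == "__main__":
    for tick, name in simulate(20000, seed=1)[:10]:
        print(tick, name)
```

Where the probabilities of a state add up to less than 100%, the remainder is the chance that the actor simply stays where it is for that step.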

But what does a 0.1% probability mean? It is the probability that our state machine will automatically make that transition in each step. If we assign a real-world duration to a step, for example 1 minute, a 0.1% probability means that a user will log in once every 1,000 minutes on average, or roughly every 17 hours. From another perspective, in each minute, on average 1 in 1,000 users will log in.
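The arithmetic behind these figures can be checked with a short calculation. It relies on the fact that the number of steps until the first success follows a geometric distribution with mean 1/p; the variable names and the population of 1,000 users are assumptions chosen for illustration.

```python
# Probability that the login transition fires in any single step (0.1%).
p_login = 0.001
# Assumed real-world duration of one step, in minutes.
step_minutes = 1

# The number of steps until the first login is geometrically distributed,
# so its mean is 1 / p.
expected_steps = 1 / p_login
expected_hours = expected_steps * step_minutes / 60

# Expected number of logins per step across a population of users.
users = 1000
expected_logins_per_step = users * p_login

print(f"{expected_steps:.0f} steps between logins, about {expected_hours:.1f} hours")
print(f"{expected_logins_per_step:.0f} login per step among {users} users")
```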

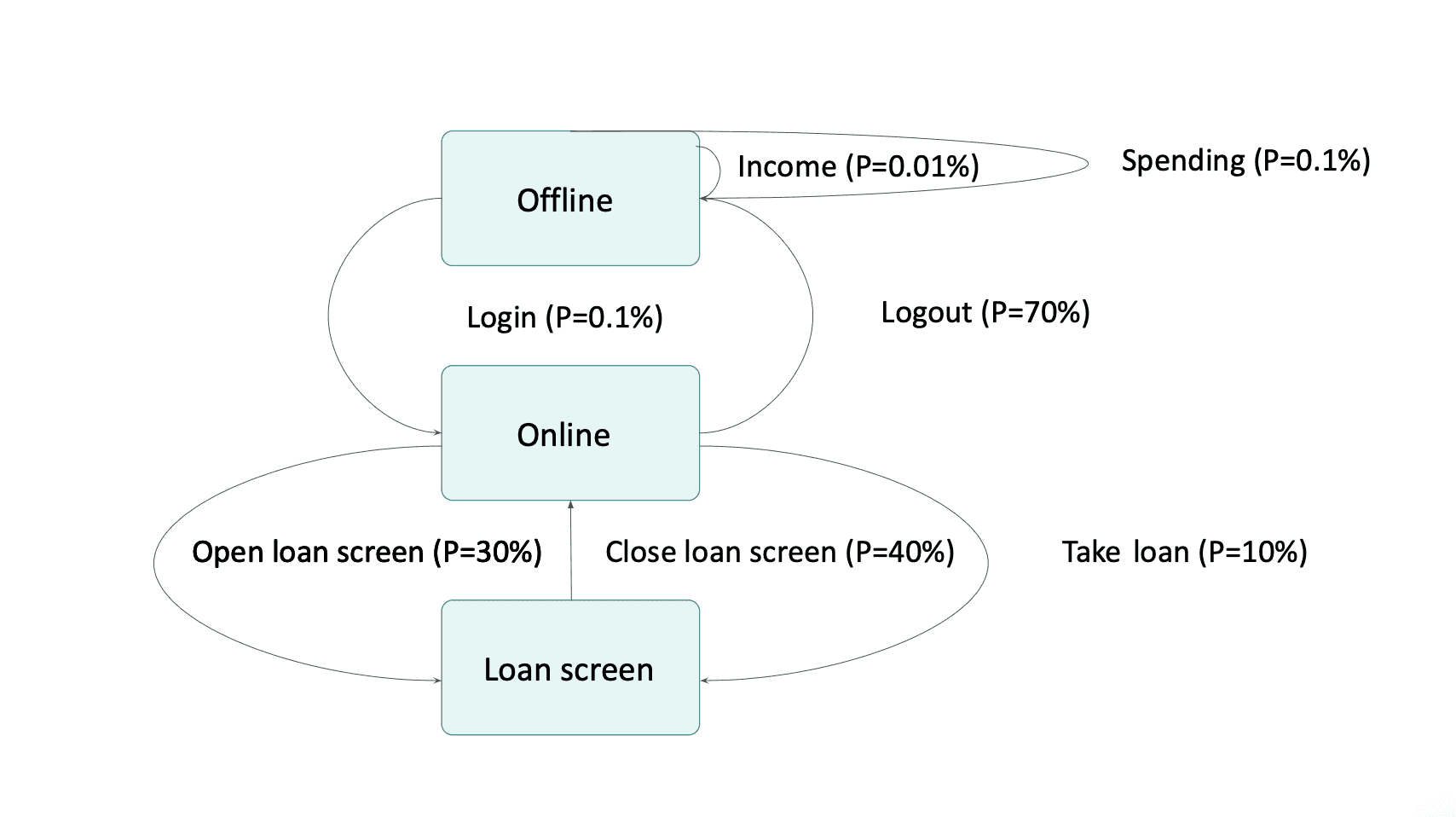

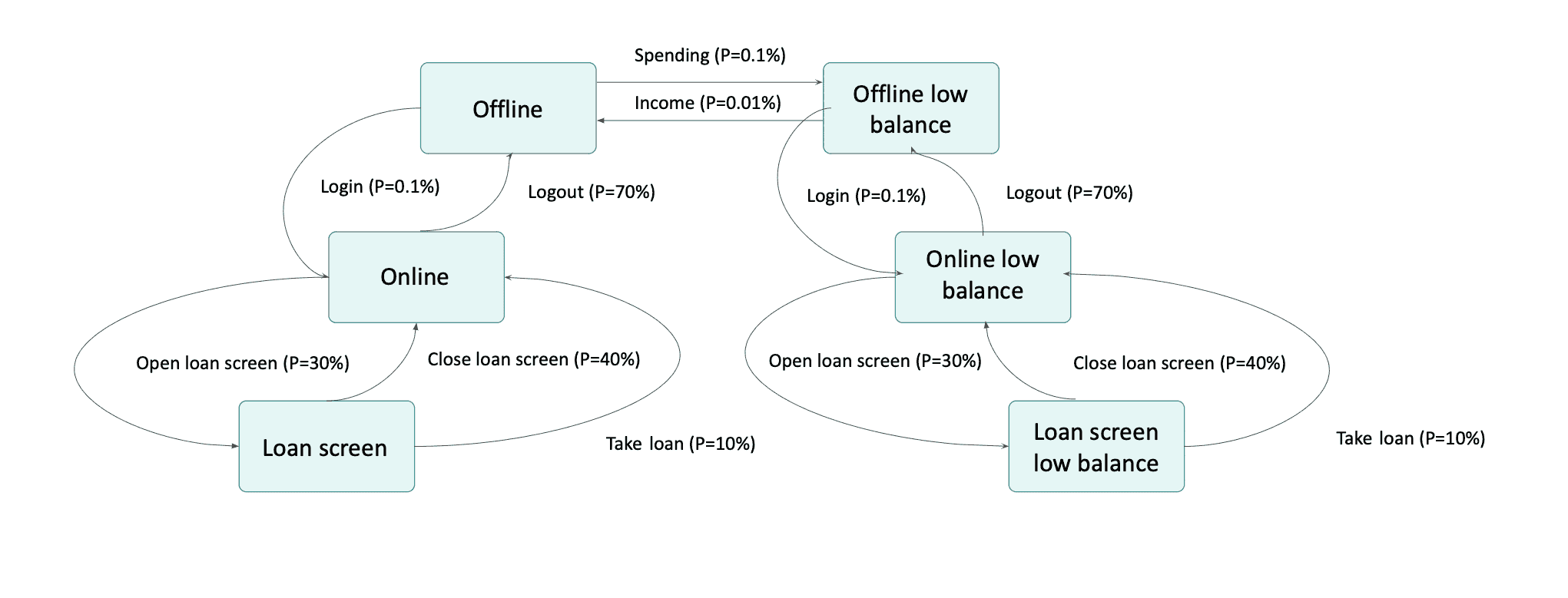

Using the above, we can generate a clickstream of user actions. But what about the balance? It cannot easily be expressed in a finite-state machine. We could think about adding a new set of states (Offline low balance, Online low balance, Loan screen low balance), but that looks like duplication, and it gets even messier when we think about adding something more, for example a loan balance.

Because the balance does not affect the set of possible actions, it should not be modeled as a state. It is just an attribute of the particular user traversing our state machine, and its value can be emitted when we generate events during transitions. Using attributes, we can go back to our simpler state machine, this time enriched with new actions that do not change the state but do change the values of attributes.

How can we generate data suitable for stream processing?

We have created a tool that can do all of the above: Data Online Generator (getindata/doge-datagen on GitHub). It can be used to simulate the behavior of users, systems or other actors based on a probabilistic model. It is a state machine that is traversed automatically by multiple subjects, based on the probability defined for each possible transition.

To express the above state machine, we need to define a list of states, the initial state, a factory that creates the initial state of users, the number of users, the tick length and the number of ticks to simulate. Then we need to define each transition: its name, its beginning and ending states, the probability of the transition, and an optional callback and sinks. The callback is invoked during the transition and allows user attributes to be changed. It can also be used to implement conditional transitions: returning False blocks the state machine from transitioning. For example, we can block the Spending transition if the balance is zero. Event sinks are used to generate events during a transition. Currently DOGE-datagen ships with sinks for databases and Kafka.
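A callback of the kind described above can be sketched without the library. The `User` class, the single-argument callback signature and the default amounts are hypothetical and chosen only for illustration; the actual callback signature expected by DOGE-datagen may differ, so check the repository before relying on it.

```python
from dataclasses import dataclass


@dataclass
class User:
    """Hypothetical subject: attributes travel with the user, not with the state."""
    user_id: int
    balance: int


def spending_callback(user, amount=50):
    """Hypothetical callback: spend up to `amount`, or block the transition.

    Returning False signals that the transition must not happen.
    """
    if user.balance == 0:
        return False
    # Never spend more than the user actually has.
    user.balance -= min(amount, user.balance)
    return True


def income_callback(user, amount=1000):
    """Hypothetical callback: income always succeeds and raises the balance."""
    user.balance += amount
    return True


if __name__ == "__main__":
    user = User(user_id=1, balance=30)
    print(spending_callback(user), user.balance)
    print(spending_callback(user), user.balance)
    print(income_callback(user), user.balance)
```

Keeping the balance on the user object means the state list stays at three states, no matter how many attributes are added later.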

    # Arguments: states, initial state, user factory, number of users,
    # tick length (ms), number of ticks, start timestamp (epoch ms).
    datagen = DataOnlineGenerator(['offline', 'online', 'loan_screen'], 'offline', UserFactory(), 10, 60000, 1000, 1644549708000)

    # Transition probabilities are given in percent per tick (0.1 means 0.1%).
    datagen.add_transition('income', 'offline', 'offline', 0.01,
                           action_callback=income_callback, event_sinks=[balance_sink])
    datagen.add_transition('spending', 'offline', 'offline', 0.1,
                           action_callback=spending_callback, event_sinks=[trx_sink, balance_sink])
    datagen.add_transition('login', 'offline', 'online', 0.1, event_sinks=[clickstream_sink])
    datagen.add_transition('logout', 'online', 'offline', 70, event_sinks=[])
    datagen.add_transition('open_loan_screen ', 'online', 'loan_screen', 30, event_sinks=[clickstream_sink])
    datagen.add_transition('close_loan_screen', 'loan_screen', 'online', 40, event_sinks=[clickstream_sink])
    datagen.add_transition('take_loan', 'loan_screen', 'online', 10,
                           action_callback=take_loan_callback, event_sinks=[clickstream_sink, loan_sink, balance_sink])

    datagen.start()

Example output:

    $ kafka-avro-console-consumer --topic clickstream --consumer.config ~/.confluent/local.conf --bootstrap-server localhost:9092 --from-beginning
    {"user_id":"7","event":"login","timestamp":1644549768000}
    {"user_id":"7","event":"open_loan_screen ","timestamp":1644549828000}
    {"user_id":"7","event":"take_loan","timestamp":1644550008000}
    {"user_id":"1","event":"login","timestamp":1644556668000}
    {"user_id":"1","event":"open_loan_screen ","timestamp":1644556728000}
    {"user_id":"1","event":"close_loan_screen","timestamp":1644556968000}
    {"user_id":"4","event":"login","timestamp":1644559668000}
    {"user_id":"4","event":"open_loan_screen ","timestamp":1644559728000}
    {"user_id":"4","event":"close_loan_screen","timestamp":1644559788000}

    $ kafka-avro-console-consumer --topic trx --consumer.config ~/.confluent/local.conf --bootstrap-server localhost:9092 --from-beginning
    {"user_id":"8","amount":75,"timestamp":1644553008000}
    {"user_id":"6","amount":47,"timestamp":1644558708000}
    {"user_id":"3","amount":42,"timestamp":1644563808000}
    {"user_id":"0","amount":85,"timestamp":1644583128000}
    {"user_id":"2","amount":40,"timestamp":1644597228000}
    {"user_id":"5","amount":18,"timestamp":1644601308000}

    postgres=# select * from balance;
       timestamp   | user_id | balance
    ---------------+---------+---------
     1644550008000 |       7 |   10933
     1644553008000 |       8 |     409
     1644558708000 |       6 |     320
     1644563808000 |       3 |     577
     1644583128000 |       0 |     259
     1644597228000 |       2 |     697
     1644601308000 |       5 |     702

    postgres=# select * from loan;
       timestamp   | user_id | loan
    ---------------+---------+-------
     1644550008000 |       7 | 10000

Data Online Generator can be used not only during development and experimentation, but also as part of automated test pipelines. It is designed to be flexible enough to support many use cases, and it is extensible, allowing for easy implementation of new event sinks. Visit our GitHub to see examples of how to work with Data Online Generator.
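As an illustration of how generated data could feed an automated test, the sketch below scans the clickstream records from the example output for the pattern discussed earlier: users who opened the loan screen and closed it without taking a loan. The function name `abandoned_loan_users` is hypothetical, the low-balance condition is left out for brevity, and the records are assumed to arrive in timestamp order.

```python
import json

# Clickstream records copied from the example output above.
CLICKSTREAM = [
    '{"user_id":"7","event":"login","timestamp":1644549768000}',
    '{"user_id":"7","event":"open_loan_screen ","timestamp":1644549828000}',
    '{"user_id":"7","event":"take_loan","timestamp":1644550008000}',
    '{"user_id":"1","event":"login","timestamp":1644556668000}',
    '{"user_id":"1","event":"open_loan_screen ","timestamp":1644556728000}',
    '{"user_id":"1","event":"close_loan_screen","timestamp":1644556968000}',
    '{"user_id":"4","event":"login","timestamp":1644559668000}',
    '{"user_id":"4","event":"open_loan_screen ","timestamp":1644559728000}',
    '{"user_id":"4","event":"close_loan_screen","timestamp":1644559788000}',
]


def abandoned_loan_users(lines):
    """Return ids of users who opened the loan screen and closed it without a loan."""
    on_loan_screen = set()
    abandoned = []
    for line in lines:
        record = json.loads(line)
        user = record["user_id"]
        # Event names are stripped of surrounding whitespace before comparison.
        event = record["event"].strip()
        if event == "open_loan_screen":
            on_loan_screen.add(user)
        elif event == "take_loan":
            on_loan_screen.discard(user)
        elif event == "close_loan_screen" and user in on_loan_screen:
            on_loan_screen.discard(user)
            abandoned.append(user)
    return abandoned


if __name__ == "__main__":
    print(abandoned_loan_users(CLICKSTREAM))
```

A real pattern-recognition job would run on the stream itself; a check like this is only meant to confirm that the generated sessions contain the behavior the job is supposed to find.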

If you're interested in stream processing, check our CEP Platform.
