A Review of the Presentations at the Big Data Technology Warsaw Summit 2023

It has been almost a month since the 9th edition of the Big Data Technology Warsaw Summit. We were thrilled to have the opportunity to organize an…

Machine Learning is now used by thousands of businesses. Its ubiquity has helped to drive innovations that are increasingly difficult to predict, and to build intelligent experiences for a business's products and services. While Machine Learning can be found everywhere, actually implementing it brings many challenges. One of those challenges is moving quickly and reliably from the experimentation phase, where Machine Learning models are developed, to the production phase, where models are served in order to bring value to the business.

The industry offers many tools that address this challenge. Public cloud providers have their own managed solutions for Machine Learning model serving and, at the same time, there is a plethora of Open Source projects focused on it too. In this post, the first in the series, we compare open source tools that run on Kubernetes, to help you decide which tool to use for your company's Machine Learning model serving.

We have focused our research on 9 main areas of model serving tools:

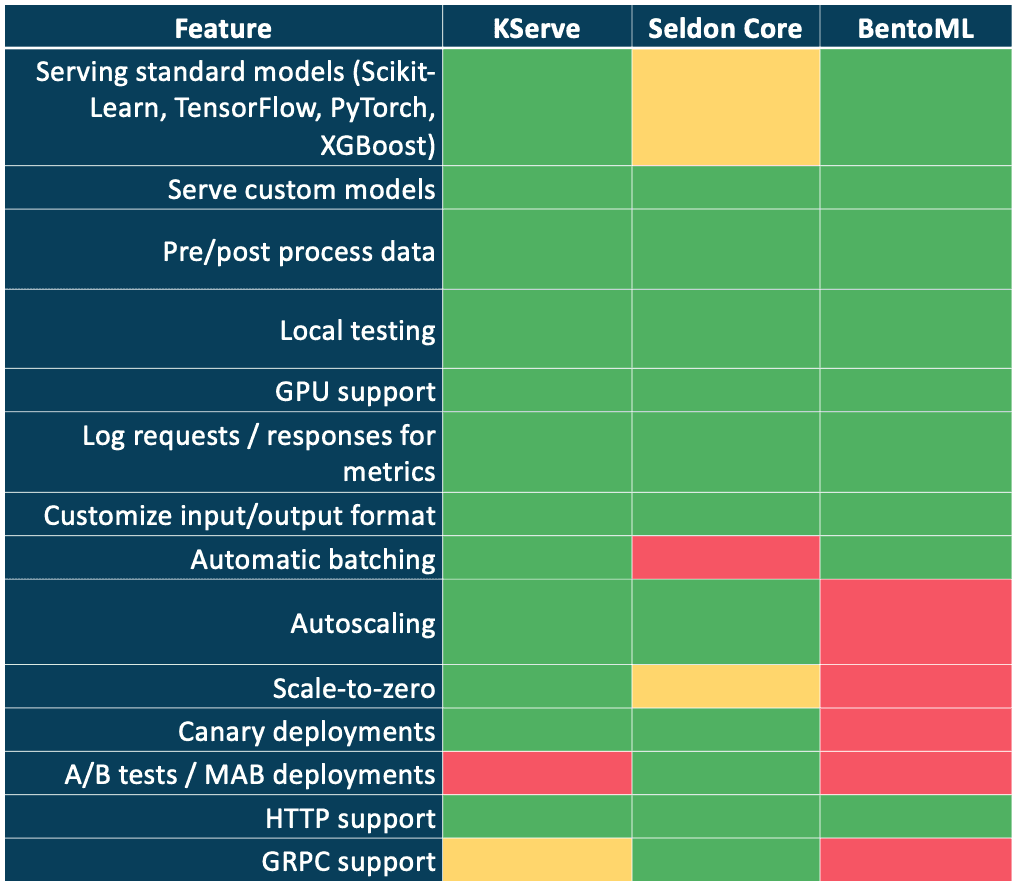

The tools we chose to compare in this post are KServe, Seldon Core and BentoML. The next post will cover cloud-based, managed serving tools.

In order to compare the tools, we set up an ML project with a standard pipeline involving data loading, data pre-processing, dataset splitting, and regression model training and testing. The pipeline required model inference to include a pre-processing step (invoking a custom Python function), so that different aspects of the serving tools could be tested. The pipeline itself made it easy to swap the model, so various modeling frameworks could be used.

KServe (named KFServing before version 0.7) is an open-source, Kubernetes-based tool providing a custom abstraction (a Kubernetes Custom Resource Definition) to define Machine Learning model serving capabilities. Its main focus is to hide the underlying complexity of such deployments so that its users only need to focus on the ML-related parts. It supports many advanced features such as autoscaling, scaling-to-zero, canary deployments and automatic request batching, as well as many popular ML frameworks out-of-the-box. It’s used by companies such as Bloomberg, NVIDIA, Samsung SDS and Cisco.

Seldon Core is an open source tool developed by Seldon Technologies Ltd as a building block of the larger (paid) Seldon Deploy solution. It’s similar to KServe in terms of the approach - it provides a high-level Kubernetes CRD and supports canary deployments, A/B testing as well as Multi-Armed-Bandit deployments.

BentoML is a Python framework for wrapping machine learning models into deployable services. It provides a simple object-oriented interface for packaging ML models and creating HTTP(s) services for them. BentoML offers in-depth integration with popular ML frameworks, so that all of the complexity related to packaging the models and their dependencies is hidden. BentoML-packaged models can be deployed in many runtimes, which include plain Kubernetes Clusters, Seldon Core, KServe, Knative as well as cloud-managed, serverless solutions like AWS Lambda, Azure Functions or Google Cloud Run.

Detailed comparison

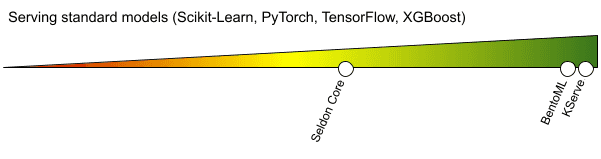

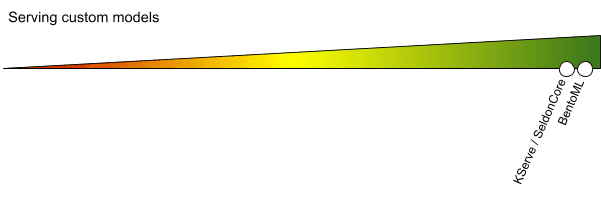

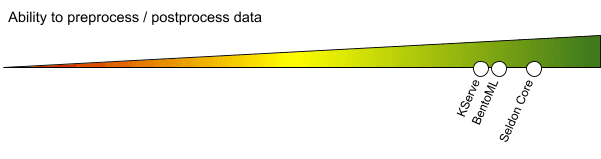

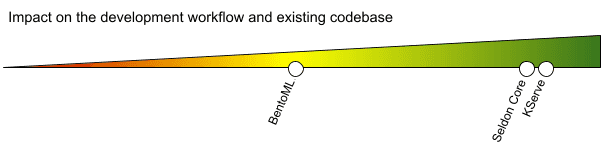

The comparison includes a description of each area for each tool, as well as a visualization of how well the tool addresses it. The scale is subjective and based on the effort required to achieve the given goal: the further to the right (green area), the better the tool addresses the aspect.

This area of comparison was focused on the tools' abilities to serve models trained in one of the popular frameworks, including: Scikit-Learn, PyTorch, TensorFlow and XGBoost.

All of the tested frameworks are fairly easy to serve. Standard frameworks are first-class citizens in KServe, as it provides pre-built Docker images for running them as well as a direct definition in the InferenceService (a custom Kubernetes resource defined by KServe).

Usually, a config file needs to be prepared in order to properly launch the models.
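As a rough illustration of such a config file, the sketch below builds a minimal InferenceService manifest for a Scikit-Learn model as a Python dictionary and prints it as JSON (a format `kubectl apply -f` also accepts). The resource name, namespace and `storageUri` are hypothetical placeholders, and the fields that are actually available depend on the installed KServe version.

```python
import json


def build_inference_service(name: str, storage_uri: str, namespace: str = "default") -> dict:
    """Return a minimal KServe InferenceService manifest for a Scikit-Learn model."""
    return {
        "apiVersion": "serving.kserve.io/v1beta1",
        "kind": "InferenceService",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            "predictor": {
                # The framework key selects one of the pre-built serving images.
                "sklearn": {"storageUri": storage_uri},
            }
        },
    }


# Hypothetical model name and bucket, used only for illustration.
manifest = build_inference_service("house-prices", "gs://example-bucket/models/house-prices")
manifest_json = json.dumps(manifest, indent=2)
print(manifest_json)
```

The printed document could be saved to a file and applied to a cluster where KServe is installed.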

Seldon Core can easily serve Scikit-Learn, XGBoost and TensorFlow models. There is no built-in support for PyTorch; it can be served via Triton Server, but that takes a lot of additional effort and requires Seldon’s v2 protocol. The v2 protocol is also enforced when using MLServer (the new recommended way to deploy models using Seldon Core), which results in some challenges downstream - see the section about preprocessing/postprocessing below.

Using BentoML boils down to implementing a custom Python class that inherits from the framework’s base class, which means that any Python framework can be used. There is built-in support for all of the standard frameworks, which handles model serialization, deserialization, dependencies as well as input/output handling. Implementing BentoML’s BentoService class interface is really easy and usually fits within a few lines of code.

A Data Scientist's work must not be limited by the set of frameworks used. It’s important for the serving solution to support any custom framework and code.

KServe allows the use of any Docker image as part of the deployment, so basically any framework/code/language (to some extent) can be used. The tool provides a Python SDK with an abstract class (KFModel) that can be inherited in order to make integration of the custom code easier.

As with KServe, Seldon Core can use any Docker image. The difference between Seldon Core and KServe in this area is that while KServe provides an SDK with classes that must be implemented, Seldon provides an SDK with classes that can be implemented (SeldonComponent), but one can also opt in to Python’s duck-typing.
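To illustrate the duck-typing option: as far as we understand Seldon Core's Python wrapper, a model can be a plain class that exposes a `predict` method, without inheriting from SeldonComponent. The sketch below assumes that method signature and uses hypothetical hard-coded coefficients in place of a trained regression model. It imports nothing from Seldon, so treat it as an outline rather than a verified deployment.

```python
from typing import List, Optional, Sequence


class LinearPriceModel:
    """Duck-typed model: no Seldon base class, only a predict() method."""

    def __init__(self, weights: Sequence[float] = (2.0, 0.5), bias: float = 1.0):
        # Hypothetical coefficients standing in for a trained regression model.
        self.weights = list(weights)
        self.bias = bias

    def predict(
        self,
        X: Sequence[Sequence[float]],
        features_names: Optional[List[str]] = None,
    ) -> List[float]:
        # One prediction per input row: dot(weights, row) + bias.
        return [sum(w * x for w, x in zip(self.weights, row)) + self.bias for row in X]


model = LinearPriceModel()
predictions = model.predict([[1.0, 2.0], [3.0, 4.0]], features_names=["rooms", "area"])
print(predictions)
```

Because the class has no framework dependency, it can be unit-tested locally before it is packaged into a serving image.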

As usage of BentoML requires implementing Python code, any customization can be done with it.

Real world Machine Learning models usually require the input data to be preprocessed in some way, either to extract features, normalize the values or transform the data. It’s crucial for the model serving tools to provide a way of plugging in pre/post processing of the data, before/after it reaches the model.

The InferenceService abstraction in KServe allows the specification of a transformer, which can handle both pre and post processing of the data. Implementation requires preparing a custom docker image with a class inherited from KServe’s SDK, similarly to implementing custom models.
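The chain a transformer implements can be sketched in plain Python. In a real KServe transformer the two steps would be the `preprocess` and `postprocess` methods of a class inheriting from the SDK, and the prediction would be an HTTP call to the predictor; here a hypothetical `fake_predictor` function stands in for that call, and the feature layout is invented for illustration.

```python
import math
from typing import Callable, Dict


def preprocess(payload: Dict) -> Dict:
    """Log-transform the (hypothetical) second feature of every instance."""
    instances = [[row[0], math.log1p(row[1])] for row in payload["instances"]]
    return {"instances": instances}


def postprocess(response: Dict) -> Dict:
    """Round predictions to two decimals before returning them to the caller."""
    return {"predictions": [round(p, 2) for p in response["predictions"]]}


def fake_predictor(payload: Dict) -> Dict:
    # Stand-in for the call to the predictor; it just sums the features of each row.
    return {"predictions": [sum(row) for row in payload["instances"]]}


def handle(payload: Dict, predictor: Callable[[Dict], Dict] = fake_predictor) -> Dict:
    """Run the pre-process -> predict -> post-process chain."""
    return postprocess(predictor(preprocess(payload)))


result = handle({"instances": [[1.0, 0.0], [2.0, math.e - 1]]})
print(result)
```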

Besides the standard pre and post processing, which can be defined as the TRANSFORMER and implemented as a Python class (with inheritance or duck-typing, see “Serving custom models”), Seldon Core offers an abstraction of inference graphs. A graph may include not only data transformations but also a custom ROUTER (e.g. dynamically deciding which of the many models that are part of the same SeldonDeployment should receive the data), as well as a COMBINER, which allows you to create an ensemble of models directly from within the deployment. Thanks to this functionality, Multi-Armed-Bandit deployments are easily achievable. One must keep in mind that when MLServer or Triton Server is used, transformations will not be possible - see the relevant GitHub issue: https://github.com/SeldonIO/MLServer/issues/287
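A Multi-Armed-Bandit ROUTER can be sketched as a small duck-typed class. The example below is an epsilon-greedy router written in plain Python; the `route` and `send_feedback` method names and parameters follow our understanding of Seldon Core's Python wrapper and should be checked against the version in use. The reward values are made up.

```python
import random
from typing import Optional, Sequence


class EpsilonGreedyRouter:
    """Duck-typed ROUTER sketch: picks which child model receives a request."""

    def __init__(self, n_branches: int = 2, epsilon: float = 0.1, seed: Optional[int] = None):
        self.epsilon = epsilon
        self.rng = random.Random(seed)
        self.pulls = [0] * n_branches       # feedback events per branch
        self.rewards = [0.0] * n_branches   # cumulative reward per branch

    def _mean_reward(self, branch: int) -> float:
        return self.rewards[branch] / self.pulls[branch] if self.pulls[branch] else 0.0

    def route(self, features, feature_names: Sequence[str]) -> int:
        # Explore a random branch with probability epsilon, otherwise exploit the best one.
        if self.rng.random() < self.epsilon:
            return self.rng.randrange(len(self.pulls))
        return max(range(len(self.pulls)), key=self._mean_reward)

    def send_feedback(self, features, feature_names, reward: float, truth, routing: Optional[int] = None):
        # Credit the reward to the branch that served the request.
        if routing is not None:
            self.pulls[routing] += 1
            self.rewards[routing] += reward


router = EpsilonGreedyRouter(n_branches=2, epsilon=0.0)
router.send_feedback(None, [], reward=0.2, truth=None, routing=0)
router.send_feedback(None, [], reward=0.9, truth=None, routing=1)
chosen = router.route([[1.0, 2.0]], ["rooms", "area"])
print(chosen)
```

With `epsilon=0.0` the router never explores, so it always sends traffic to the branch with the highest average reward seen so far.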

As in the previous areas, any code can be run as part of a BentoML deployment.

Here we focus on whether using the tools requires changes in the development workflow (e.g. adjusting to a new set of APIs, making some changes in the existing CI/CD setup, modifying the training code and using new artifacts storage for models etc.).

KServe integrates well with existing DevOps deployment pipelines (whether they deploy directly from Kubernetes manifests, Helm Charts or other tools), as a deployment requires only a simple resource definition. From the Data Scientists'/Machine Learning Engineers' perspective, the adjustments are rather minimal - models can be served from any cloud storage, like S3 or GCS. Existing CI/CD pipelines which build Docker images can be left intact. Changes to the Docker images themselves are optional and required only if custom code needs to be launched.

Similarly to KServe, Seldon Core does not impact the existing DevOps/Software Engineering workflows. Deployments are performed from Kubernetes manifests. As long as one of the supported frameworks is used, minimal effort from the Data Scientists / Machine Learning Engineers is needed. However, any customization or use of non-standard frameworks might complicate the workflow, and some of the features might become unavailable (because they are not implemented yet, see “Ability to preprocess / postprocess data”).

Although implementing Python classes is not a difficult task, the process of delivering BentoML-based services to the execution environment (e.g. a Kubernetes cluster) will require changes in the CI/CD pipeline. BentoML saves the BentoService-inherited class with a serialized model, Python code and all of the dependencies into a separate archive/directory. The archive contains a Dockerfile, which allows you to build a standalone serving container image. Because the BentoML archive is created as an artifact, the CI/CD pipeline needs to consume it and trigger another build. From the deployment perspective, everything needs to be handled manually, which in the case of Kubernetes means writing Deployment definitions.
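To give an idea of the manual part, the sketch below generates a minimal Kubernetes Deployment definition for an image built from a BentoML archive. The image name, replica count and container port are assumptions for illustration (we assume the service listens on port 5000); a real setup would typically also need a Service, resource limits and health probes.

```python
import json


def build_deployment(name: str, image: str, replicas: int = 2, port: int = 5000) -> dict:
    """Return a minimal apps/v1 Deployment manifest for a model-serving image."""
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name, "labels": labels},
        "spec": {
            "replicas": replicas,
            # The selector must match the labels of the pod template below.
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    "containers": [
                        {"name": name, "image": image, "ports": [{"containerPort": port}]}
                    ]
                },
            },
        },
    }


# Hypothetical registry and tag produced by the second CI/CD build.
deployment = build_deployment("house-prices", "registry.example.com/house-prices:1.0.0")
print(json.dumps(deployment, indent=2))
```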

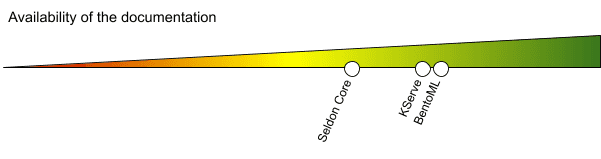

Quick adoption of the serving tools is only possible if good documentation is provided. Community support via GitHub/Slack was also considered.

KServe's documentation covers the important aspects. Non-trivial use-cases require searching through the GitHub issues or asking others on the Slack channel, where the community is quite active.

Seldon Core's documentation covers mostly trivial use-cases, and a lot of links lead to 404 pages. Advanced scenarios can be found on GitHub, but some of them are deprecated.

BentoML has fairly robust documentation with a lot of up-to-date examples. Both code and concepts are well described.

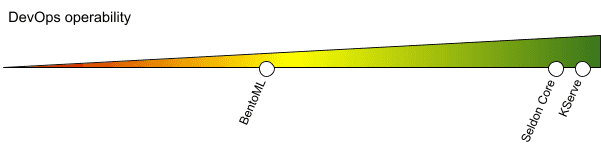

After the deployment, the DevOps teams are usually responsible for monitoring and maintaining the production applications. The model serving tools need to be accessible to DevOps, to allow for repeatable deployments, provide monitoring and ways to diagnose issues that might occur at runtime under high load.

KServe’s stack is based on well-established Open Source tools, Knative and Istio, which are DevOps-first and Kubernetes-native. Monitoring is based on the widely adopted Prometheus. Deployments can be done using any Kubernetes-compatible solution, whether it’s directly from kubectl, Helm or Helmsman. Logging is easily configurable and the messages are usually descriptive. Canary deployments are available out-of-the-box.

Seldon only requires Istio or Ambassador to be available in order to operate. Monitoring is also done via Prometheus. Similarly to KServe, any Kubernetes deployment solution can be used. Logging can be easily configured, but for some parts there are no logs at all. Canary deployments as well as A/B test deployments are available out-of-the-box.

As BentoML is code-first, support for DevOps can be configured thanks to many integrations with tracing tools (e.g. Jaeger) and monitoring tools (Prometheus). The configuration as well as the deployment in Kubernetes requires manual implementation though. BentoML can, however, be used with many existing serving solutions or even serverless services because, at the end of the day, the final result is a plain Docker image.

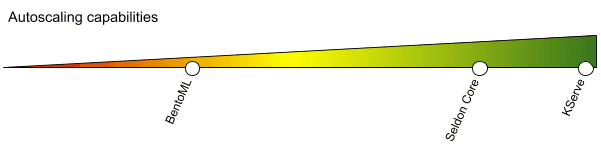

Deployed models should satisfy business needs not only when it comes to the quality of their predictions, but also for their throughput. Serving solutions should allow models to scale up when the traffic spikes and scale down when it goes back to normal.

Thanks to the tight integration with Knative, KServe offers best-in-class autoscaling features. Deployed models can be scaled up not only by leveraging Kubernetes’ CPU utilization metric, but also by high-level metrics such as requests per second or concurrency (how many requests can be simultaneously processed by a single container with the model). KServe also offers scale-to-zero with rapid activation, which makes it easier to keep the overall costs of the cluster low. There is also built-in support for automatic request batching, which helps to utilize the pods’ resources better.
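The idea behind concurrency-based scaling can be shown with simple arithmetic: the number of replicas is roughly the number of in-flight requests divided by the target concurrency per container, rounded up. The function below is a deliberately simplified sketch of that rule, not Knative's actual algorithm, which also uses averaging windows and other safeguards; the function name and the `max_replicas` cap are our own.

```python
import math


def desired_replicas(in_flight_requests: float, target_concurrency: float, max_replicas: int = 10) -> int:
    """Estimate how many replicas are needed for the observed concurrency."""
    if target_concurrency <= 0:
        raise ValueError("target_concurrency must be positive")
    wanted = math.ceil(in_flight_requests / target_concurrency)
    # Zero in-flight requests yields zero replicas, i.e. scale-to-zero.
    return min(wanted, max_replicas)


print(desired_replicas(25, 10))   # traffic spike: 25 concurrent requests, 10 per container
print(desired_replicas(0, 10))    # idle service
```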

As Seldon Core is Kubernetes-native, a standard Horizontal Pod Autoscaler with metrics such as CPU and memory utilization can be used. Event-based metrics require installing KEDA and integrating with it. With KEDA, scale-to-zero becomes possible via KEDA-native event sources. HTTP scale-to-zero requires further add-ons for KEDA.

As BentoML is a code-first framework, it does not offer any autoscaling features, as they depend purely on the chosen runtime (BentoML can be deployed to KServe, Seldon Core, SageMaker Endpoints and many other cloud solutions too). The framework does, however, support automatic batching of requests, which allows tuning the serving performance (to some extent) once deployed.
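Automatic batching means that several incoming requests are grouped and passed to the model in a single call, which is usually cheaper than many single-row calls. The sketch below shows only the grouping step, in a synchronous and simplified form; real implementations typically also wait for a short, configurable time window to fill a batch. All names here are hypothetical and unrelated to BentoML's API.

```python
from typing import Callable, List, Sequence


def serve_in_batches(
    requests: Sequence[List[float]],
    predict_batch: Callable[[List[List[float]]], List[float]],
    max_batch_size: int = 4,
) -> List[float]:
    """Group requests into batches and call the model once per batch."""
    results: List[float] = []
    for start in range(0, len(requests), max_batch_size):
        batch = list(requests[start:start + max_batch_size])
        results.extend(predict_batch(batch))
    return results


calls: List[int] = []  # records the size of every batch the model received


def predict_batch(batch: List[List[float]]) -> List[float]:
    # Stand-in model: sums the features of each row.
    calls.append(len(batch))
    return [sum(row) for row in batch]


outputs = serve_in_batches([[1.0], [2.0], [3.0], [4.0], [5.0]], predict_batch, max_batch_size=4)
print(outputs, calls)
```

Five requests result in two model calls instead of five, while each caller still receives its own prediction in order.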

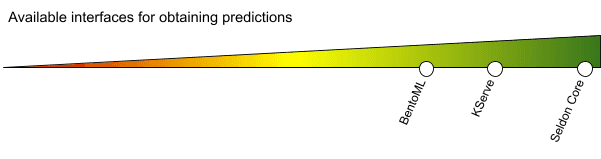

Usually models are served as HTTP(s)-based services with JSON input/output. Various use cases might require different request/response formats or the use of faster, binary protocols such as gRPC, so the serving tools should also support them.

Although KServe does not limit which protocols can be used, the default serving method is HTTP-based. Non-JSON inputs/outputs require a custom transformer. The configuration allows the use of gRPC or any other protocol, but handling such protocols requires a manual, custom implementation.
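For the default HTTP/JSON path, a prediction request in KServe's v1 protocol is a POST to `/v1/models/<model-name>:predict` with an `instances` list in the body. The sketch below only builds such a request with the standard library and does not send it; the host name, model name and feature values are hypothetical.

```python
import json
import urllib.request


def build_predict_request(base_url: str, model_name: str, instances: list) -> urllib.request.Request:
    """Build (but do not send) a v1-protocol prediction request."""
    body = json.dumps({"instances": instances}).encode("utf-8")
    return urllib.request.Request(
        url=f"{base_url}/v1/models/{model_name}:predict",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


request = build_predict_request(
    "http://house-prices.default.example.com", "house-prices", [[3.0, 72.5]]
)
print(request.get_method(), request.full_url)
# Sending it would be urllib.request.urlopen(request), which requires a running service.
```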

Similarly to KServe, Seldon Core does not limit the use of protocols. Moreover, it provides default implementations for both HTTP and gRPC serving methods, so that every deployed model can automatically respond to both HTTP and gRPC requests. Request and response formats can be handled via a custom implementation in a TRANSFORMER.

With BentoML, only HTTP is supported (gRPC support seems to be in a stale state, see https://github.com/bentoml/BentoML/issues/703 ). Again, as BentoML is code-first, handling any kind of request is possible. The framework offers a few pre-implemented handling methods, so that requests can be parsed from CSV, JSON and other formats.

Last but not least, this area of the comparison covers infrastructure management - it considers the effort required to operate the given tool in production and at scale.

KServe and Seldon Core both rely fully on the underlying Kubernetes cluster for infrastructure management. If cloud-managed Kubernetes is used, the process could follow the Infrastructure-as-Code approach. The tools themselves do not have high resource requirements when deployed in existing clusters.

BentoML relies on the chosen deployment target, so it’s not considered in this area as it might vary from low to high effort to operate.

Moving Machine Learning models from the experimentation phase into APIs running in production is a complex process. All of the compared tools try to make some of its aspects easier, faster or even effortless. At the same time, all of the tools have their downsides - that’s why it’s important to know what capabilities such tools offer, and what can be achieved with them given the project’s main goals and constraints. We hope that this comparison will help you to make well-informed decisions when it comes to serving Machine Learning models. In the next post we will cover cloud-native, managed machine learning serving tools. Stay tuned!

