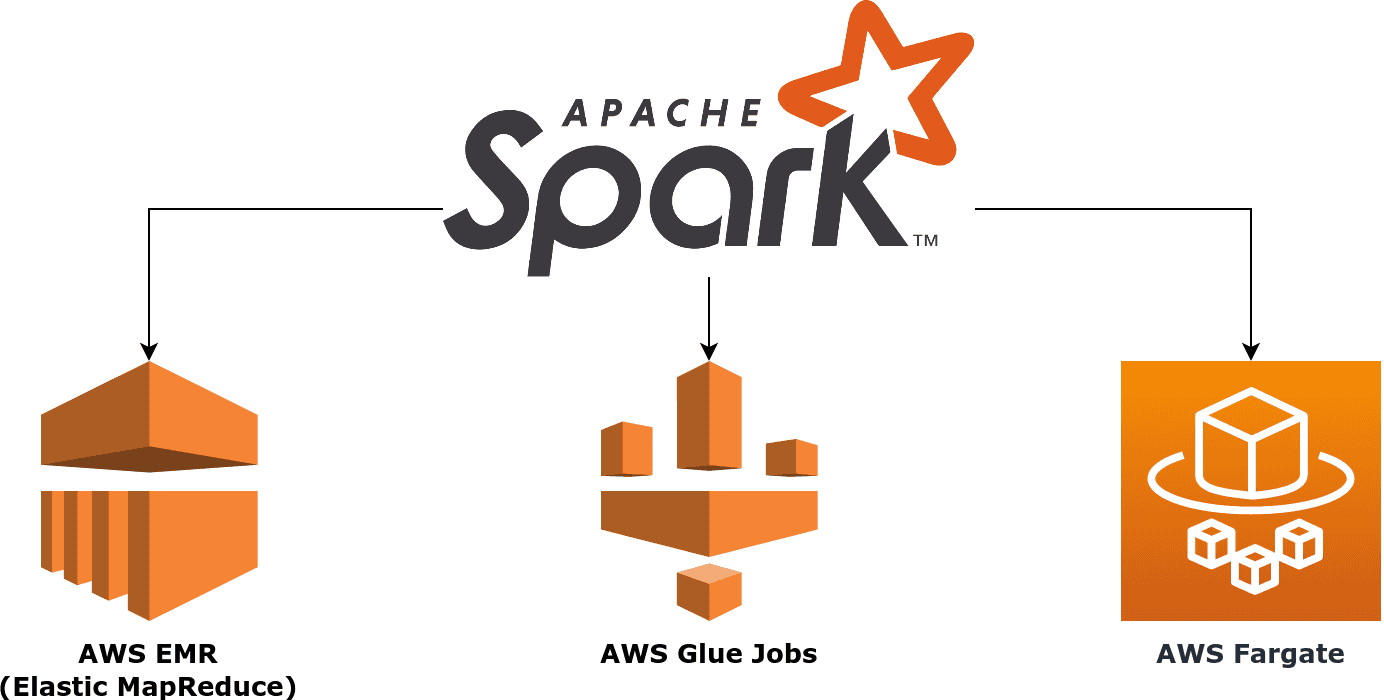

When you search the web for ways to run Apache Spark on AWS infrastructure, you will most likely be redirected to the documentation of the AWS EMR (Elastic MapReduce) service, Amazon's Hadoop distribution designed to run in the AWS cloud environment. It's quite an easy way to deploy your data pipelines, but bootstrapping a huge cluster just to perform a simple ad-hoc analysis can be a cumbersome task. As they say:

"to a man with a hammer everything looks like a nail" :)

and we fell into this trap with EMR once.

The article below describes two other ways of running Apache Spark jobs on AWS-managed infrastructure - AWS Glue and AWS Fargate - that we use in our clients' data warehousing projects. It covers the key differences between these methods in terms of flexibility and pricing, and shows why there is no place for a "one service fits all" approach in the AWS world.

Check it out!
